Introduction

Mobile app testing involves networks, not just the app or the device itself. Understanding the basics of telecommunications gives you an advantage when testing mobile applications, because testers need to understand how networks and communications affect the testing scope. This is the first in a multi-post series on the differences between networks, their impact on testing, and how to create effective tests that both simulate different network failures and examine critical behavior across multiple network types.

Transmission Control Protocol/Internet Protocol (TCP/IP) is a group, or suite, of networking protocols used to connect computers on the Internet. It is also the protocol suite used for all mobile data traffic. TCP and IP are the two main protocols in the suite:

- TCP provides transport functions, ensuring, among other things, that data arrives complete and in order, so that the data received is the same as the data transmitted

- The IP part of TCP/IP provides the addressing and routing mechanism

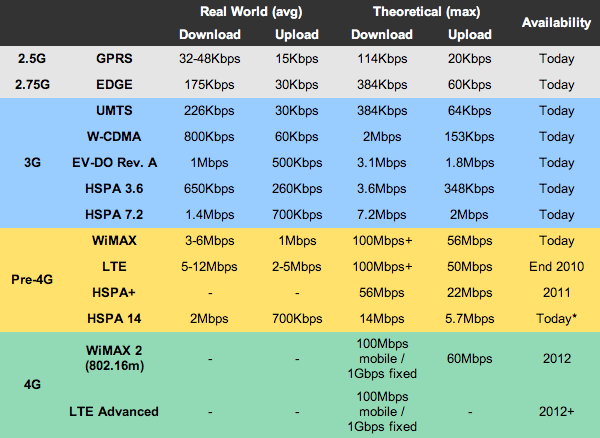
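To make the TCP bullet above concrete for testers, here is a toy model of in-order reassembly in Python. It is a minimal sketch, not how a real TCP stack works: `Segment`, `segment`, and `reassemble` are hypothetical names, each segment is tagged with a byte offset in the way TCP sequence numbers track position in the stream, and a missing range is reported as an error where real TCP would wait for a retransmission.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    offset: int    # position of the first payload byte in the stream (like a TCP sequence number)
    payload: bytes


def segment(data: bytes, size: int) -> list[Segment]:
    """Split a byte stream into fixed-size segments tagged with their offsets."""
    return [Segment(i, data[i:i + size]) for i in range(0, len(data), size)]


def reassemble(segments: list[Segment], expected_len: int) -> bytes:
    """Rebuild the stream from segments that may arrive out of order or duplicated.

    Raises ValueError when bytes are missing; a real TCP receiver would
    instead wait for the sender to retransmit them.
    """
    buf = bytearray()
    for seg in sorted(segments, key=lambda s: s.offset):
        if seg.offset > len(buf):
            raise ValueError(f"gap at byte {len(buf)}")
        # Keep only bytes not already received (handles duplicates and overlaps).
        buf += seg.payload[len(buf) - seg.offset:]
    if len(buf) != expected_len:
        raise ValueError(f"received {len(buf)} of {expected_len} bytes")
    return bytes(buf)


if __name__ == "__main__":
    data = b"hello mobile network"
    segs = segment(data, 4)
    # Deliver the segments in reverse order, plus one duplicate.
    print(reassemble(segs[::-1] + [segs[0]], len(data)))  # b'hello mobile network'
```

Dropping any one segment from the list makes `reassemble` raise, which is the kind of condition a network-failure test wants the app to survive.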

While all mobile data traffic uses these protocols, each mobile operator may support multiple network technologies to carry them. The major technologies in the United States are LTE, CDMA, and GSM; some operators use less common or local networking standards such as iDEN, FOMA, and TD-SCDMA. There are more than 400 mobile networks globally, and each has a unique combination of network infrastructure that tunnels the packet-based protocols used inside the mobile network into the TCP/IP protocols used by the mobile Web. Operators perform this tunneling with systems from different vendors, and those systems behave slightly differently. These differences can cause applications that require Internet access to behave differently from one network to another, so understanding them helps both in troubleshooting and in designing tests that simulate and stress each network's unique characteristics.

CDMA, GSM, and LTE differences

Due to the vast number of networks available, this post will focus mainly on describing the differences between CDMA, GSM, and LTE.

- CDMA (Code Division Multiple Access) is a “spread spectrum” technology for cellular networks that enables many more wireless users to share the airwaves than alternative technologies.

- GSM (Global System for Mobile Communications) is a set of standards describing the protocols for cellular networks used by mobile phones, with over 80% market share globally.

- LTE (Long Term Evolution) is a wireless broadband technology for communication of high speed data for mobile phones.

The main difference between the technologies is that CDMA and GSM both support voice and data, whereas LTE supports only data. The LTE standard supports only packet switching over its all-IP network. This means that if a phone does not also have CDMA or GSM technology, traditional cellular (voice) services will NOT work; only data will. LTE also cannot carry analog signals, and it does not support 1G or many 2G networks. Voice calls in GSM, UMTS, and CDMA2000 are circuit switched, so with the adoption of LTE, carriers have to re-engineer their voice call networks.

CDMA and GSM are both multiple access technologies: ways to fit multiple phone calls or Internet connections into one radio channel. There are many multiple access technologies; some of the more common ones are:

FDMA (Frequency Division Multiple Access)

Older analog systems use FDMA, including the Advanced Mobile Phone Service (AMPS), an analog cellular phone technology. FDMA divides the available spectrum into separate frequency channels and gives each call its own narrow channel, over which it is sent continuously. Once phone systems moved to 2G (digital), FDMA started to be phased out.
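A toy model of the FDMA idea, in which each call holds its own frequency channel for as long as the call lasts, might look like the sketch below. The class name `FdmaCell` and the band and channel widths in the example are made up for illustration and do not describe AMPS or any real network.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class FdmaCell:
    """Toy FDMA cell: the band is cut into fixed-width channels, and each
    active call holds one channel for its whole duration."""
    band_start_khz: float
    band_width_khz: float
    channel_width_khz: float
    in_use: dict[str, int] = field(default_factory=dict)  # call id -> channel index

    @property
    def num_channels(self) -> int:
        return int(self.band_width_khz // self.channel_width_khz)

    def center_khz(self, channel: int) -> float:
        """Center frequency of a channel within the band."""
        return self.band_start_khz + (channel + 0.5) * self.channel_width_khz

    def start_call(self, call_id: str) -> int | None:
        """Assign the lowest free channel, or return None if every channel is busy."""
        busy = set(self.in_use.values())
        for ch in range(self.num_channels):
            if ch not in busy:
                self.in_use[call_id] = ch
                return ch
        return None  # blocked: capacity is fixed by the number of channels

    def end_call(self, call_id: str) -> None:
        """Release the call's channel so another call can use it."""
        self.in_use.pop(call_id, None)


if __name__ == "__main__":
    cell = FdmaCell(band_start_khz=0.0, band_width_khz=90.0, channel_width_khz=30.0)
    print([cell.start_call(c) for c in ("a", "b", "c", "d")])  # [0, 1, 2, None]
    cell.end_call("b")
    print(cell.start_call("e"), cell.center_khz(1))  # 1 45.0
```

With three channels, the fourth simultaneous call is blocked until an earlier call ends: capacity is set by how many channels fit in the band.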

GSM (Global System for Mobile Communications)

GSM was the first multiple access technology that ran data over mobile networks. GSM uses time division to multiplex its signals: each call's data takes its turn being sent. Your voice is converted into digital data, which is given a channel and a time slot. Three calls on one channel would look like:

123123123123

On the other end, the receiver listens only to its assigned time slot and pieces the call back together. This requires coordination between the sending and receiving ends. The pulsing of the time-division signal created the notorious “GSM buzz,” a buzzing sound heard whenever you put a GSM phone near a speaker.
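The time-slot pattern above can be sketched in a few lines of Python. This is a simplified illustration that assumes every call produces the same number of samples; `tdma_mux` and `tdma_demux` are hypothetical helper names, and real GSM framing (8 time slots per frame, guard periods, synchronization) is left out.

```python
from __future__ import annotations


def tdma_mux(calls: list[list[str]]) -> list[str]:
    """Build the shared stream: in every frame, slot i carries one sample of call i."""
    frames = zip(*calls)  # assumes every call has the same number of samples
    return [sample for frame in frames for sample in frame]


def tdma_demux(stream: list[str], num_slots: int, slot: int) -> list[str]:
    """A receiver listens only to its own slot in each frame."""
    return stream[slot::num_slots]


if __name__ == "__main__":
    calls = [list("1111"), list("2222"), list("3333")]
    stream = tdma_mux(calls)
    print("".join(stream))                     # 123123123123
    print("".join(tdma_demux(stream, 3, 1)))   # 2222
```

If the receiver's idea of slot boundaries drifts by even one position, it reassembles another caller's samples, which is why both ends must stay coordinated.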

CDMA (Code Division Multiple Access)

CDMA requires a bit more processing power, because it is a “code division” system. Every call's data is encoded with a unique code, and then all the calls are transmitted at once. If you have calls 1, 2, and 3 in a channel, the channel would just carry the combined signal, 555555555555. Each receiver has its call's unique code and uses it to “divide” the combined signal back into its individual call, loosely similar to how each side of an encrypted exchange uses a key to recover the message.
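The code-division idea can be demonstrated with orthogonal Walsh codes. The sketch below is a toy under stated assumptions rather than a real CDMA air interface: it uses 4-chip codes from a Hadamard matrix, perfect chip synchronization, and no noise, and all function names are hypothetical. Each user's bits are multiplied by its code, the channel adds everything together, and each receiver recovers only its own bits by correlating with its code.

```python
from __future__ import annotations


def walsh_codes(n: int) -> list[list[int]]:
    """Return n mutually orthogonal +1/-1 codes (n must be a power of two),
    built with the Sylvester construction of a Hadamard matrix."""
    codes = [[1]]
    while len(codes) < n:
        codes = [row + row for row in codes] + [row + [-c for c in row] for row in codes]
    return codes


def spread(bits: list[int], code: list[int]) -> list[int]:
    """Map each bit to +1/-1 and multiply it by every chip of the user's code."""
    return [(1 if b else -1) * chip for b in bits for chip in code]


def channel(*signals: list[int]) -> list[int]:
    """All users transmit at once; the channel simply adds their chips together."""
    return [sum(chips) for chips in zip(*signals)]


def despread(combined: list[int], code: list[int]) -> list[int]:
    """Correlate each code-length chunk with one user's code. The other users'
    contributions sum to zero because the codes are orthogonal."""
    n = len(code)
    bits = []
    for i in range(0, len(combined), n):
        corr = sum(x * c for x, c in zip(combined[i:i + n], code))
        bits.append(1 if corr > 0 else 0)
    return bits


if __name__ == "__main__":
    codes = walsh_codes(4)
    call1, call2, call3 = [1, 0, 1], [0, 0, 1], [1, 1, 0]
    air = channel(spread(call1, codes[1]), spread(call2, codes[2]), spread(call3, codes[3]))
    print(air[:4])                   # [1, -3, 1, 1]: one combined signal
    print(despread(air, codes[2]))   # [0, 0, 1]: call 2 recovered
```

Real systems use longer codes and additional scrambling; the point here is only why the other calls cancel out at each receiver.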

Code division turned out to be a more powerful and flexible technology, so “3G GSM” is actually a CDMA technology, called WCDMA (Wideband CDMA) or UMTS (Universal Mobile Telecommunications System). As the name implies, WCDMA requires wider channels than older CDMA systems, but it has more data capacity. The main difference between CDMA and WCDMA is the family of technologies each belongs to. CDMA is a 2G technology and a direct competitor to GSM, which is the most widely deployed technology. WCDMA is a 3G technology that is often used in tandem with GSM to provide both 2G and 3G capabilities within the same coverage area. WCDMA and CDMA do not belong to the same line: the 3G technology of the CDMA line is called EV-DO, and it is the competitor to WCDMA.

HSPA (High Speed Packet Access)

HSPA extends and improves the performance of third-generation (3G) mobile telecommunication networks that use the WCDMA protocols.

LTE (Long Term Evolution)

LTE is based on the GSM/EDGE and UMTS/HSPA network technologies, increasing capacity and speed by using a different radio interface together with core network improvements. However, unlike GSM and CDMA, it uses OFDM (Orthogonal Frequency Division Multiplexing), a form of transmission that uses a large number of closely spaced carriers, each modulated with low-rate data. Normally these signals would be expected to interfere with each other, but making the signals orthogonal to each other eliminates mutual interference. The data to be transmitted is split across all the carriers to give resilience against selective fading from multipath effects.
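Splitting data across orthogonal carriers can be illustrated with an inverse DFT at the transmitter and a DFT at the receiver. This is a simplified sketch under stated assumptions: a plain O(n²) DFT instead of an FFT, QPSK on every subcarrier, no cyclic prefix, no noise, and hypothetical function names. Because the subcarriers are orthogonal over one block, the receiver's DFT returns each subcarrier's symbol without interference from the others.

```python
from __future__ import annotations

import cmath


def qpsk_map(bits: list[int]) -> list[complex]:
    """QPSK: each pair of bits becomes one complex symbol (2 bits per symbol)."""
    return [complex(1 - 2 * b0, 1 - 2 * b1) for b0, b1 in zip(bits[0::2], bits[1::2])]


def qpsk_demap(symbols: list[complex]) -> list[int]:
    """Decide each bit from the sign of the real and imaginary parts."""
    bits = []
    for s in symbols:
        bits += [0 if s.real > 0 else 1, 0 if s.imag > 0 else 1]
    return bits


def idft(freq: list[complex]) -> list[complex]:
    """Transmitter: place one symbol on each subcarrier and sum the subcarriers
    into a single time-domain block (inverse DFT)."""
    n = len(freq)
    return [sum(X * cmath.exp(2j * cmath.pi * k * t / n) for k, X in enumerate(freq)) / n
            for t in range(n)]


def dft(block: list[complex]) -> list[complex]:
    """Receiver: the DFT separates the block back into per-subcarrier symbols,
    because the subcarriers are orthogonal over the block length."""
    n = len(block)
    return [sum(x * cmath.exp(-2j * cmath.pi * k * t / n) for t, x in enumerate(block))
            for k in range(n)]


if __name__ == "__main__":
    data = [1, 0, 0, 1, 1, 1, 0, 0, 0, 1, 1, 0, 1, 1, 0, 1]  # 16 bits -> 8 subcarriers
    tx_block = idft(qpsk_map(data))
    print(qpsk_demap(dft(tx_block)) == data)  # True
```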

Within the OFDM signal it is possible to choose between three types of modulation for the LTE signal:

- QPSK (= 4QAM) 2 bits per symbol

- 16QAM 4 bits per symbol

- 64QAM 6 bits per symbol

The exact LTE modulation format is chosen depending on the prevailing signal conditions, and it can change as those conditions change. This means that it is important to test over each modulation type.
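The bits-per-symbol figures above translate directly into how many symbols a transfer needs. The short sketch below assumes an arbitrary 1,500-byte payload and ignores channel coding and protocol overhead; the helper names are hypothetical.

```python
import math

# Constellation sizes of the three LTE modulation options listed above.
MODULATIONS = {"QPSK": 4, "16QAM": 16, "64QAM": 64}


def bits_per_symbol(points: int) -> int:
    """A symbol picks one of `points` constellation points, so it carries log2(points) bits."""
    return int(math.log2(points))


def symbols_needed(payload_bytes: int, points: int) -> int:
    """Symbols required to carry a payload, ignoring coding and protocol overhead."""
    return math.ceil(payload_bytes * 8 / bits_per_symbol(points))


if __name__ == "__main__":
    for name, points in MODULATIONS.items():
        print(name, bits_per_symbol(points), symbols_needed(1500, points))
    # QPSK 2 6000
    # 16QAM 4 3000
    # 64QAM 6 2000
```

The same payload needs three times as many symbols at QPSK as at 64QAM, so a test run only under strong-signal conditions may not show how the app behaves when the link falls back to a lower-order modulation.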

So What…

So why do you need to know about this, and why should you care? Understanding how information is encoded and transmitted means that we can better simulate network traffic. Realizing that information travels in different ways also means recognizing that errors in the signal (a poor signal-to-noise ratio, dropped packets, loss of network) cause different parts of the data to be lost on different networks. Depending on how your application functions, the same fault may affect it in different ways.
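One way to start simulating the dropped-packet case in a test is to put a seeded, lossy stand-in in front of the app's networking code. The sketch below is hypothetical: `LossyLink` and `send_with_retries` are illustrative names, not part of any framework, and the model covers only independent random packet loss. Setting `drop_rate` to 1.0 approximates a lost network; S/N effects are not modeled.

```python
from __future__ import annotations

import random


class LossyLink:
    """Hypothetical test double for a network link that drops each packet
    independently with probability drop_rate. The fixed seed makes runs repeatable."""

    def __init__(self, drop_rate: float, seed: int = 0) -> None:
        self.drop_rate = drop_rate
        self.rng = random.Random(seed)
        self.delivered: list[bytes] = []

    def send(self, packet: bytes) -> bool:
        """Return True if the packet got through, False if it was dropped."""
        if self.rng.random() < self.drop_rate:
            return False
        self.delivered.append(packet)
        return True


def send_with_retries(link: LossyLink, packet: bytes, max_attempts: int) -> int:
    """Stand-in for an app's retry logic: return the attempt number that
    succeeded, or raise once every attempt has been dropped."""
    for attempt in range(1, max_attempts + 1):
        if link.send(packet):
            return attempt
    raise ConnectionError(f"gave up after {max_attempts} attempts")


if __name__ == "__main__":
    link = LossyLink(drop_rate=0.3, seed=42)
    for i in range(5):
        try:
            print(i, "delivered on attempt", send_with_retries(link, b"pkt", max_attempts=3))
        except ConnectionError as err:
            print(i, err)
```

Because the seed is fixed, a failure found this way can be replayed exactly while the app's retry logic is being fixed.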

In the next blog post we will examine how errors within the signal affect each network differently, and the following post will cover how to design tests for these networks that simulate both the data errors and the network itself.
